From Pilot to Production: An AI Adoption Roadmap for Banking & Enterprise IT (Governance, Vendor Risk, and Measurable ROI in 90 Days)

2026-04-27

Set 90-day priorities and choose the right pilots

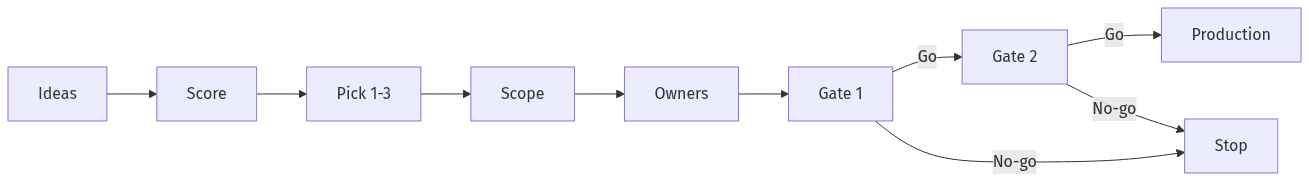

90-day pilot flow with clear decision gates

Across banking and enterprise IT, AI programs often stall when early pilots look encouraging but lack a 90-day decision structure that reconciles value, control readiness, and organizational ownership. A workable AI adoption roadmap in these environments usually narrows scope to a small set of pilots that can clear security review, procurement diligence, and audit expectations without drifting into open-ended experimentation. Executive confidence typically increases when outcomes are defined, dependencies are explicit, and decision points separate “learning” from “production intent.” That structure builds momentum while limiting both exposure and sunk cost.

Select and prioritize use cases

Use-case prioritization in regulated environments typically centers on repeatable dimensions: measurable value, data sensitivity, integration complexity, and control readiness. A consistent scoring approach reduces circular debate and prevents backlogs driven by novelty rather than feasibility. It also creates a traceable rationale for why certain generative AI use cases advance while others remain constrained by privacy, confidentiality, or model risk management expectations.
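As a minimal sketch of what a consistent scoring approach could look like, the snippet below ranks candidate use cases on the four dimensions named above. The weights, the 1 to 5 scale, and the example use cases are illustrative assumptions, not values from any standard; each organization would calibrate its own.

```python
from dataclasses import dataclass

# Hypothetical weights (assumption); they sum to 1.0 so scores stay on the 1-5 scale.
WEIGHTS = {
    "value": 0.4,
    "data_sensitivity": 0.2,
    "integration_complexity": 0.2,
    "control_readiness": 0.2,
}


@dataclass(frozen=True)
class UseCase:
    name: str
    value: int                   # 1 (low) to 5 (high) measurable value
    data_sensitivity: int        # 1 (public data) to 5 (highly sensitive data)
    integration_complexity: int  # 1 (simple) to 5 (complex)
    control_readiness: int       # 1 (no controls) to 5 (controls already in place)


def score(uc: UseCase) -> float:
    """Weighted score; higher means a stronger 90-day pilot candidate."""
    # Sensitivity and complexity count against a use case, so they are inverted.
    total = (
        WEIGHTS["value"] * uc.value
        + WEIGHTS["data_sensitivity"] * (6 - uc.data_sensitivity)
        + WEIGHTS["integration_complexity"] * (6 - uc.integration_complexity)
        + WEIGHTS["control_readiness"] * uc.control_readiness
    )
    return round(total, 2)


def rank(use_cases):
    """Return use cases ordered from strongest to weakest candidate."""
    return sorted(use_cases, key=score, reverse=True)


# Illustrative candidates (assumptions, not recommendations).
candidates = [
    UseCase("customer email drafting", 5, 5, 4, 2),
    UseCase("internal knowledge search", 4, 2, 2, 4),
]
ranked = rank(candidates)
```

Keeping the per-dimension inputs alongside the final score is what provides the traceable rationale: a reviewer can see that a high-value use case ranked lower because of data sensitivity rather than because of opinion.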

Define scope, owners, and go/no-go gates

Pilot-to-production friction often reflects unclear accountability and missing decision gates. Named ownership across IT, security, and risk functions signals that a pilot is intended to withstand operational scrutiny rather than remain a demonstration, while explicit go/no-go criteria reduce repeated extensions. A 90-day horizon tends to hold when scope stays narrow enough to fit review cycles and evidence collection without diluting the underlying business outcome.
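One way to make go/no-go criteria explicit is to record each criterion with a named owner and evaluate the gate mechanically. The sketch below assumes a simple rule (every criterion must have an owner and be met); the class names and example criteria are hypothetical.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    GO = "go"
    NO_GO = "no-go"


@dataclass(frozen=True)
class GateCriterion:
    name: str
    owner: str  # accountable function, e.g. "security"; empty means unowned
    met: bool


def evaluate_gate(criteria):
    """Return (decision, blockers). GO requires every criterion owned and met."""
    if not criteria:
        # A gate with no criteria cannot justify a production decision.
        return Decision.NO_GO, ["no criteria defined"]
    blockers = [c.name for c in criteria if not c.owner or not c.met]
    decision = Decision.NO_GO if blockers else Decision.GO
    return decision, blockers


# Illustrative day-90 gate (criteria are assumptions).
day_90_gate = [
    GateCriterion("security review complete", "security", True),
    GateCriterion("baseline KPI captured", "", True),           # no owner assigned
    GateCriterion("vendor evidence filed", "procurement", False),
]
decision, blockers = evaluate_gate(day_90_gate)
```

Returning the list of blockers along with the decision gives stakeholders a concrete reason for a no-go instead of an open-ended extension.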

Align teams with simple rules and accountability

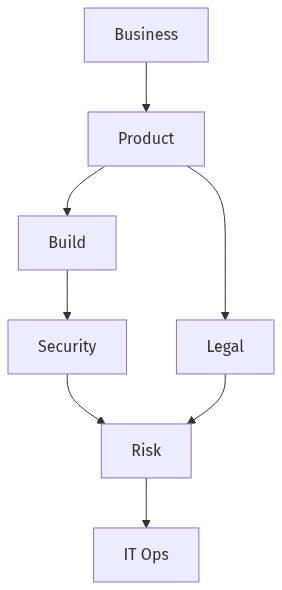

Simple role map from build to run

AI delivery in banks often slows when governance is treated as a parallel bureaucracy rather than a shared operating model. More durable programs express governance as a small set of decision rights, approval expectations, and separation-of-duties boundaries that guide work without constant exceptions. Alignment across CIO/CTO organizations, security, compliance, and procurement reduces rework, because the same issues (data handling, model behavior limits, logging, and third-party exposure) recur across use cases. Simpler governance reduces cycle time and avoids fragmented accountability.

Decision-making and approvals

Approval pathways for generative AI often become opaque when stakeholder roles overlap or when “review” lacks defined decision authority. Clear decision structures reduce time spent in escalation loops and prevent late-stage objections that surface after delivery investment. Executive stakeholders generally prioritize predictability and traceability, particularly when regulatory scrutiny and audit review can frame a technical change as a material risk event.

Ownership from build to run

Operational ownership frequently becomes the blocker when pilots show value but lack an agreed transition into support, monitoring, and incident response. Separation of duties across build, approval, and run functions remains a standard expectation in banking, particularly for systems affecting customers, employees, or code changes. Defined operational accountability also strengthens audit narratives by linking controls, oversight, and remediation responsibility to named roles.

Meet banking-grade readiness expectations

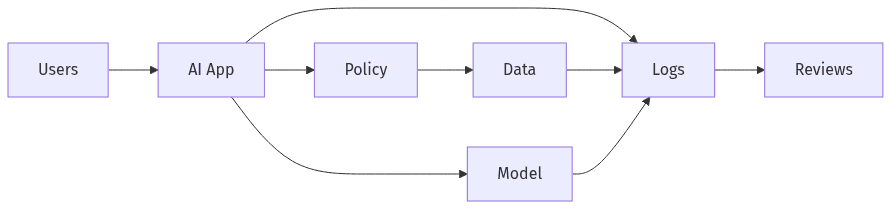

Guardrails and records for production readiness

Production readiness for AI in regulated enterprise IT usually extends beyond functional correctness into a control posture aligned with confidentiality, integrity, availability, and auditability. Generative AI raises that bar because the risk surface includes data exposure, unintended content generation, and opaque third-party dependencies. Banking-grade readiness expectations often converge on security guardrails, privacy principles aligned to regimes such as GDPR, and evidentiary records appropriate for internal audit and supervisory review. Programs that treat readiness as an oversight and evidence problem, not only a technical one, typically clear gates with fewer rework cycles.

Prevent data exposure and misuse

Data leakage concerns frequently dominate executive risk discussions, especially when LLM usage intersects with customer information, proprietary code, or regulated records. Enterprise readiness commonly includes alignment to data classification, policy-enforced access constraints, and loss-prevention expectations intended to limit accidental disclosure. Reliance on “prompt-only” safety is often viewed as inadequate because it provides limited enforcement and inconsistent exception handling.
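To illustrate the difference between prompt-only safety and policy-enforced constraints, the sketch below checks a data classification label against a per-destination ceiling before anything is sent to a model. The classification levels, destination names, and ceilings are hypothetical; a real deployment would source them from the organization's own classification scheme and loss-prevention tooling.

```python
from enum import IntEnum


class Classification(IntEnum):
    PUBLIC = 0
    INTERNAL = 1
    CONFIDENTIAL = 2
    RESTRICTED = 3


# Hypothetical policy: the highest classification each destination may receive.
MAX_ALLOWED = {
    "internal_hosted_model": Classification.CONFIDENTIAL,
    "external_vendor_api": Classification.INTERNAL,
}


def is_allowed(destination: str, label: Classification) -> bool:
    """Deny by default: unknown destinations may not receive any data."""
    ceiling = MAX_ALLOWED.get(destination)
    return ceiling is not None and label <= ceiling


def check_or_raise(destination: str, label: Classification) -> None:
    """Enforce the policy in code instead of relying on prompt instructions."""
    if not is_allowed(destination, label):
        raise PermissionError(
            f"{label.name} data may not be sent to {destination!r}"
        )
```

Because the check runs before the request leaves the application, a denial is enforced and can be logged consistently, which is the property prompt-level instructions cannot guarantee.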

Ensure visibility and accountability

Auditability expectations often translate into persistent records: model and vendor decisions, control mappings, approvals, and operational logs that support investigation and oversight. Observability matters because many AI issues present as quality drift, data mismatch, or behavioral changes rather than clear outages. Evidence-by-design reduces late-stage documentation churn and makes risk reviews more consistent across use cases, teams, and vendors.
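As a rough sketch of evidence-by-design, the record types listed above could share one structured shape that is written as a log line at decision time. The field names, record types, and control identifier below are illustrative assumptions rather than a prescribed schema.

```python
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class EvidenceRecord:
    use_case: str
    record_type: str         # e.g. "approval", "vendor_decision", "control_mapping"
    decided_by: str          # named role, not an individual's free-text note
    decision: str
    recorded_at: str         # ISO 8601 timestamp supplied by the caller
    control_ids: tuple = ()  # internal control identifiers (hypothetical format)


def to_log_line(record: EvidenceRecord) -> str:
    """Serialize with sorted keys so identical records produce identical lines."""
    return json.dumps(asdict(record), sort_keys=True)


# Illustrative record (all values are assumptions).
record = EvidenceRecord(
    use_case="internal knowledge search",
    record_type="approval",
    decided_by="model risk officer",
    decision="approved for pilot",
    recorded_at="2026-04-27T09:00:00+00:00",
    control_ids=("AC-01",),
)
line = to_log_line(record)
```

A single shape across use cases, teams, and vendors is what makes later reviews comparable; the storage and retention mechanism is deliberately left out of the sketch.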

Streamline vendor and model risk review

Vendor adoption can accelerate AI capability, but it also introduces third-party risk and model opacity that procurement and risk teams must reconcile. In banking, friction often comes from duplicated questionnaires and inconsistent review standards across security, compliance, legal, and model risk management. A unified view of third-party responsibilities, data handling, and model limitations reduces contradictory conclusions and cuts rework. Executive governance is easier to maintain when vendor narratives and internal model risk expectations align into procurement-ready evidence.

Third-party review essentials

Third-party review usually focuses on reliability, privacy posture, and contractual clarity around data usage, retention, and breach obligations, with SOC 2 artifacts often used as directional inputs. Exit options and lock-in exposure remain relevant because AI vendors can become embedded in workflows quickly. Procurement readiness improves when documentation is complete, consistent, and reusable rather than reconstructed for each stakeholder group.

Internal review expectations

Internal model risk expectations typically emphasize limits of use, oversight mechanisms, and approval traceability, especially when outputs influence decisions or regulated communications. Documenting intended purpose, constraints, and known limitations supports defensibility when outcomes are questioned by audit or compliance stakeholders. Consistent mapping to internal policies reduces interpretation drift and makes approvals less dependent on individual reviewers.

Prove ROI and package deliverables for scale

AI programs that reach production in banks typically pair risk readiness with an ROI narrative that holds under executive scrutiny and budget governance. Near-term value becomes believable when baselines exist, KPIs reflect operational outcomes rather than novelty, and reporting stays consistent enough to support go/no-go decisions. A 90-day window increases the need for measurable signals because extended timelines can be interpreted as ongoing experimentation. Reusable documentation also supports scale, since procurement and audit cycles often penalize net-new artifact creation.

ROI metrics and reporting cadence

ROI discussions generally become decision-grade when metrics tie to baseline performance and are tracked on a regular cadence rather than through sporadic updates. Vanity outcomes such as general “user satisfaction” tend to carry less weight than measurable efficiency gains, reduced manual rework, fewer exceptions, or faster cycle times that can be explained and verified. Consistent reporting also strengthens governance by making tradeoffs visible across security, risk, and delivery priorities.
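Tying a metric to its baseline can be as simple as reporting percentage improvement at each reporting interval. The sketch below assumes metrics where a lower value is better by default (cycle time, exceptions, rework); the function name and the sample figures are illustrative, not measured results.

```python
def relative_improvement(
    baseline: float, current: float, lower_is_better: bool = True
) -> float:
    """Percentage improvement against baseline, rounded to one decimal place."""
    if baseline <= 0:
        raise ValueError("baseline must be positive to compute a relative change")
    if lower_is_better:
        change = (baseline - current) / baseline
    else:
        change = (current - baseline) / baseline
    return round(change * 100, 1)


# Hypothetical pilot figures: (baseline, current) per KPI.
kpis = {
    "cycle_time_hours": (40.0, 30.0),
    "exceptions_per_month": (120.0, 96.0),
}
report = {
    name: relative_improvement(before, after)
    for name, (before, after) in kpis.items()
}
```

A negative result indicates the metric moved in the wrong direction, which is as useful for a go/no-go decision as a positive one.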

Reusable delivery documentation

Scale often depends on an artifact pack that procurement and control functions recognize: control mappings, risk summaries, vendor evidence, and production readiness records reused across similar deployments. Reuse reduces cycle-time pressure in procurement and increases consistency of oversight. Shared evidence also limits reinvention, a recurring reason pilots stall before broader rollout.