AI Adoption for eCommerce Customer Service: Vendor Scorecard + Architecture Pattern for Order Status, Returns, and Fraud-Safe Account Changes

2026-05-14

Identify customer-service risks for orders, returns, and account changes

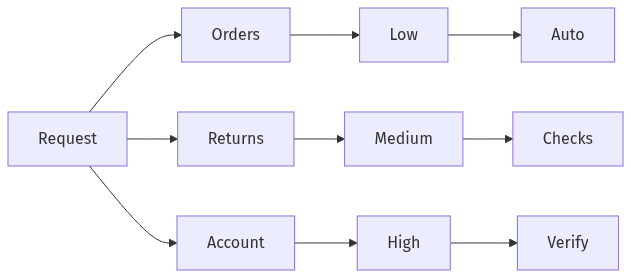

Risk tiers across orders, returns, and account changes


eCommerce customer service concentrates volume and risk into a small set of workflows: order status, returns, and account changes. LLM-enabled automation moves these workflows from agent discretion to software-mediated decisions, increasing the importance of explicit risk boundaries. Order status is typically inquiry-heavy and operationally repetitive; returns introduce fraud pressure and policy interpretation; account edits carry the highest stakes because address, email, and payment changes intersect with account takeover. Security and customer experience goals often diverge when all three workflows receive the same automation treatment.

Know what customer data is involved

Support interactions routinely include email addresses, phone numbers, shipping addresses, order numbers, tracking links, and free-text descriptions that sometimes contain payment-adjacent details. PII exposure risk rises when tickets, chat transcripts, and CRM notes flow into prompts or logs without tight data minimization and access boundaries. In many support operations, the practical privacy posture depends less on model choice than on how data scope is constrained across intake, retrieval, and retention.

Separate low-risk questions from high-risk changes

Customer requests fall on a spectrum from informational questions to irreversible account actions. WISMO ("where is my order") inquiries usually carry lower fraud impact, while refunds, reshipments, and account edits concentrate financial loss and reputational risk. In many eCommerce queues, social-engineering attempts and automation quality failures cluster around these high-impact outcomes rather than around routine status explanations.
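One way to make this spectrum explicit is a static mapping from intent to risk tier. The sketch below is illustrative only: the intent names, the three-tier split, and the choice to place return requests in a middle tier are assumptions, not a taxonomy from any particular vendor.

```python
from enum import IntEnum


class RiskTier(IntEnum):
    LOW = 1     # informational; no state change
    MEDIUM = 2  # policy interpretation with some fraud pressure
    HIGH = 3    # financial or identity impact


# Hypothetical intent names; a real deployment would use its own classifier labels.
INTENT_TIERS = {
    "order_status": RiskTier.LOW,
    "return_policy_question": RiskTier.LOW,
    "return_request": RiskTier.MEDIUM,
    "refund_request": RiskTier.HIGH,
    "reshipment_request": RiskTier.HIGH,
    "address_change": RiskTier.HIGH,
    "email_change": RiskTier.HIGH,
    "payment_method_change": RiskTier.HIGH,
}


def tier_for(intent: str) -> RiskTier:
    """Return the tier for an intent; unknown intents fail closed to HIGH."""
    return INTENT_TIERS.get(intent, RiskTier.HIGH)


def can_fully_automate(intent: str) -> bool:
    """Only LOW-tier intents are eligible for unattended automation."""
    return tier_for(intent) is RiskTier.LOW
```

Defaulting unknown intents to the highest tier means a newly introduced request type gets the strictest handling until someone classifies it deliberately.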

Choose vendors with a simple security and fit scorecard

The vendor landscape spans CX suites, "LLM wrappers," agent platforms, and standalone chatbots, and surface-level feature overlap often obscures differences in control maturity. A scorecard tests whether a product’s design matches eCommerce threat realities, particularly around identity, permissions, and auditability. Common misconceptions, such as assuming redaction alone prevents leakage or treating SOC 2 as a complete safety signal, can bias selection toward speed while importing avoidable exposure.

Safety and privacy requirements

Security posture is best assessed through concrete controls: least-privilege enforcement, retrieval permissions, isolation boundaries, and predictable data-handling commitments. Vendors that hold up under scrutiny tend to be specific about retention controls, logging scope, and policy enforcement mechanisms rather than relying on generic “enterprise security” positioning. Compliance considerations often include CCPA/CPRA expectations for consumer data and PCI DSS adjacency when support conversations drift into payment context.

Integration and day-to-day operations fit

Operational fit frequently determines whether controls stay intact in day-to-day support. Systems that map cleanly to existing ticketing, CRM, and order-management identifiers reduce workarounds, duplicated identities, and mismatched customer records. Administrative capabilities such as approvals, role-based access patterns, and audit-ready reporting often separate pilots that perform well in demos from deployments that remain defensible under fraud pressure.
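A scorecard of this kind can be reduced to a weighted sum plus a few must-pass gates. The sketch below assumes reviewers rate each criterion from 0 to 5. The criterion names, weights, and gate threshold are hypothetical placeholders to be replaced with a team's own priorities.

```python
# Hypothetical criteria and weights (weights sum to 1.0).
WEIGHTS = {
    "least_privilege": 0.25,
    "retrieval_permissions": 0.20,
    "auditability": 0.20,
    "retention_controls": 0.15,
    "integration_fit": 0.20,
}

# Criteria that disqualify a vendor when rated too low, regardless of total score.
MUST_PASS = ("least_privilege", "auditability")
MIN_GATE_RATING = 3


def score_vendor(ratings: dict[str, int]) -> tuple[float, bool]:
    """Return (weighted score on a 0-5 scale, whether all gates pass)."""
    missing = set(WEIGHTS) - set(ratings)
    if missing:
        raise ValueError(f"missing ratings: {sorted(missing)}")
    total = sum(WEIGHTS[name] * ratings[name] for name in WEIGHTS)
    passes_gates = all(ratings[name] >= MIN_GATE_RATING for name in MUST_PASS)
    return round(total, 2), passes_gates
```

Keeping the gates separate from the weighted total prevents strong integration scores from masking a weak permission model.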

Set clear limits on what AI can see and do

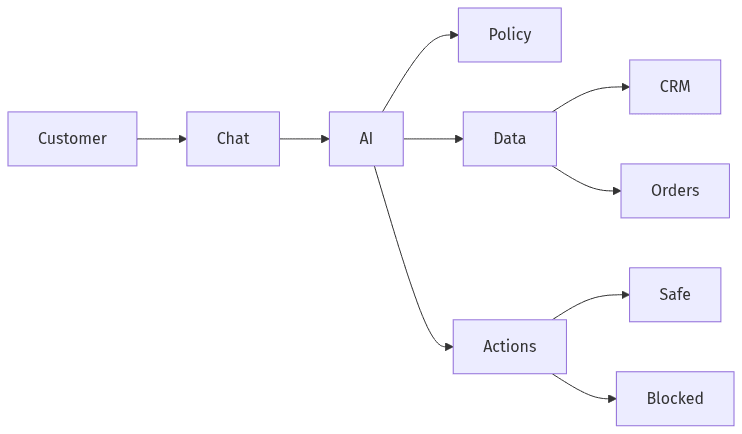

Bounded AI access: minimum data and controlled actions


LLM customer support risk tends to concentrate in two channels: information exposure and unauthorized action. More resilient operating models treat the LLM as one component within a controlled perimeter, not as a privileged operator. Retrieval and tool/function calling expand capability, but they also expand the blast radius when customer messages or retrieved content carry prompt-injection text, or when outputs drift into policy-violating statements. Consistent least-privilege constraints generally correlate with fewer high-severity incidents and a more defensible compliance posture.

Limit information to the minimum needed

Data minimization often outperforms “sanitize everything” because support quality rarely requires full customer-record dumps. Retrieval that narrows results to the smallest relevant fields reduces accidental PII leakage and lowers the chance that unrelated sensitive data appears in outputs. Across RAG deployments, over-broad retrieval is a recurring source of privacy exposure even when responses read as accurate.
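Field-level minimization can be sketched as a per-intent allow-list applied before any record reaches the prompt. The intent names and field names below are assumptions for illustration; the point is that anything not explicitly listed is dropped.

```python
# Hypothetical per-intent allow-lists; fields not listed never reach the model.
ALLOWED_FIELDS = {
    "order_status": {"order_id", "status", "carrier", "tracking_url", "estimated_delivery"},
    "return_policy_question": set(),  # answered from policy text, no customer record needed
}


def minimal_context(intent: str, record: dict) -> dict:
    """Return only the allow-listed fields for this intent.

    Unknown intents get an empty context rather than the full record.
    """
    allowed = ALLOWED_FIELDS.get(intent, set())
    return {key: value for key, value in record.items() if key in allowed}
```

For example, an order record that also holds an email address and shipping address yields only status and tracking fields for an `order_status` request, so those identifiers cannot appear in the model's output or in prompt logs.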

Restrict actions to safe, approved changes

Tool permissions are a recurring failure point in agentic support because a model that can initiate account edits or refunds effectively becomes a high-privilege actor. Strong control models keep actions narrowly scoped with explicit authorization boundaries and auditable decision traces. Server-side enforcement matters because policy compliance becomes brittle when it relies on prompt phrasing or conversational guardrails alone.
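Server-side enforcement can be sketched as an authorization check that runs outside the model, between a proposed tool call and its execution. The tool names, the `session_verified` flag, and the policy table here are hypothetical. Refund and account-edit tools are deliberately absent from the allow-list in this sketch.

```python
from dataclasses import dataclass, field


@dataclass
class ToolCall:
    name: str
    args: dict = field(default_factory=dict)
    session_verified: bool = False  # set by the auth layer, never by the model


# Hypothetical allow-list; anything not listed is denied.
TOOL_POLICY = {
    "get_order_status": {"requires_verification": False},
    "create_return_label": {"requires_verification": True},
}


def authorize(call: ToolCall, audit_log: list) -> bool:
    """Decide whether a model-proposed tool call may run, and record the decision."""
    policy = TOOL_POLICY.get(call.name)
    if policy is None:
        allowed, reason = False, "tool_not_allowlisted"
    elif policy["requires_verification"] and not call.session_verified:
        allowed, reason = False, "verification_required"
    else:
        allowed, reason = True, "allowed"
    audit_log.append({"tool": call.name, "allowed": allowed, "reason": reason})
    return allowed
```

Because the decision depends only on the policy table and session state, a prompt-injected instruction can at most propose a call; it cannot change whether the call is permitted.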

Add extra protection for refunds and account changes

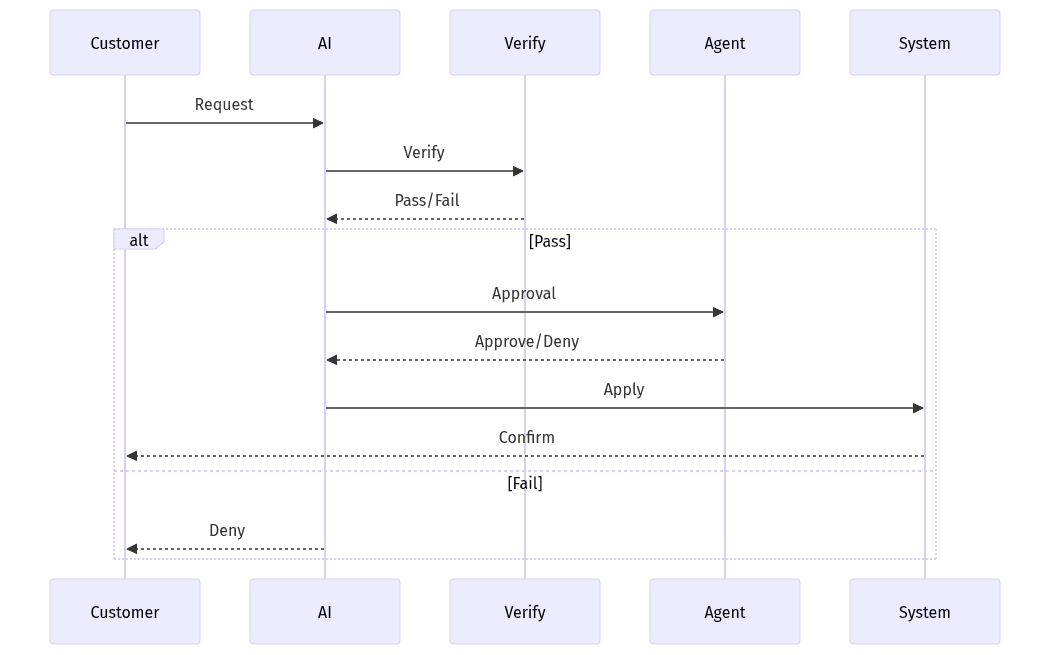

Verification and approvals for high-impact requests


Refunds and account edits combine direct financial exposure with attacker motivation, and they often attract social engineering that exploits customer-service empathy norms. LLMs increase throughput, which can increase loss velocity when controls lag behind expanded automation scope. Fraud-resistant designs typically treat refund authority and identity authority as separate concerns, each requiring additional friction and stronger logging. Executive risk tolerance often depends on whether these workflows remain bounded and reviewable after automation.

Require stronger verification for sensitive edits

Account changes such as address, email, and payment updates are a common pathway for account takeover and downstream chargebacks. Higher-assurance expectations tend to apply to these edits, especially when requests diverge from prior customer behavior or originate from higher-risk locations. In many fraud programs, geographic and behavioral risk signals carry more operational value than conversational fluency.
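A step-up rule for sensitive edits might look like the following sketch. The signal names and the three verification levels are assumptions; real fraud programs typically draw these signals from a dedicated risk service rather than boolean flags.

```python
from dataclasses import dataclass

# Fields named in the text as common account-takeover pathways.
SENSITIVE_FIELDS = {"address", "email", "payment_method"}


@dataclass
class EditRequest:
    field_name: str
    diverges_from_history: bool = False  # e.g. first-ever change right before an order
    high_risk_location: bool = False     # as judged by an upstream risk signal


def required_verification(req: EditRequest) -> str:
    """Return the verification level required before the edit may proceed.

    "session"       - existing login is sufficient
    "step_up"       - additional proof such as a one-time code
    "manual_review" - route to a human before any change is made
    """
    if req.field_name not in SENSITIVE_FIELDS:
        return "session"
    if req.diverges_from_history or req.high_risk_location:
        return "manual_review"
    return "step_up"
```

Note that nothing in the rule depends on how convincing the conversation sounds; the inputs are the field being changed and the risk signals attached to the request.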

Use approvals for refunds and high-value returns

Approval gates often function as fraud control and quality control when policies include nuance and exceptions. High-value refunds and ambiguous return claims frequently warrant human confirmation because model outputs can reflect incorrect or invented policy interpretations. Consistent audit trails for approvals and denials often determine whether post-incident analysis is feasible and whether loss teams can validate root causes.
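An approval gate can be sketched as a routing function that returns a structured, timestamped decision suitable for an audit trail. The auto-approve limit and reason codes below are hypothetical; actual limits would come from the merchant's refund policy.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from decimal import Decimal

# Hypothetical threshold for illustration only.
AUTO_APPROVE_LIMIT = Decimal("50.00")


@dataclass(frozen=True)
class RefundDecision:
    order_id: str
    amount: Decimal
    route: str       # "auto_approve" or "human_approval"
    reason: str
    decided_at: str  # ISO 8601 timestamp in UTC


def route_refund(order_id: str, amount: Decimal, claim_is_ambiguous: bool) -> RefundDecision:
    """Route a refund request and return a record that can be stored for audit."""
    if claim_is_ambiguous:
        route, reason = "human_approval", "ambiguous_claim"
    elif amount > AUTO_APPROVE_LIMIT:
        route, reason = "human_approval", "over_auto_approve_limit"
    else:
        route, reason = "auto_approve", "within_policy_limit"
    decided_at = datetime.now(timezone.utc).isoformat()
    return RefundDecision(order_id, amount, route, reason, decided_at)
```

Storing the reason code alongside each decision lets a loss team later separate refunds that were approved within policy from those that a reviewer approved as an exception.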

Run a 30-day pilot and decide based on safety and outcomes

A short pilot window often shows whether automation reduces workload without shifting cost into risk management and rework. Thirty days commonly provides enough interaction volume to surface failure modes around sensitive data handling, policy drift, and jailbreak-style attempts while keeping exposure bounded. Decision-grade evaluation ties customer experience outcomes to security outcomes, since gains in deflection and average handle time (AHT) can coexist with rising fraud pressure. The case for a production rollout typically depends on evidence that controls remain stable under real adversarial conditions.

Keep the pilot tight and low-risk

Low-risk intents tend to dominate early value because order status and basic policy questions map to constrained answers with limited customer-data exposure. A tight scope reduces the chance that perceived success depends on untracked manual intervention. Human-in-the-loop coverage often stabilizes pilots by containing edge cases while surface areas and permissions are still being exercised under load.

Track performance and safety results together

Evaluation is more reliable when operational metrics like deflection, AHT, and customer satisfaction (CSAT) are reviewed alongside safety signals such as PII exposure indicators, policy-violation rates, suspicious tool-call attempts, and approval override frequency. A go/no-go posture is easier to defend when these measures appear in a single rubric rather than disconnected reporting streams. Monitoring and incident response readiness often matter as much as baseline answer quality.
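A single rubric can be sketched as one function that checks operational and safety measures together and lists every blocker. All thresholds and field names below are hypothetical and would need to be set against a team's own baseline and risk tolerance.

```python
from dataclasses import dataclass


@dataclass
class PilotResults:
    deflection_rate: float         # share of contacts resolved without an agent
    csat_delta: float              # change in CSAT versus the pre-pilot baseline
    pii_exposure_incidents: int    # confirmed exposures during the pilot
    policy_violation_rate: float   # share of sampled responses violating policy
    approval_override_rate: float  # share of AI recommendations reversed by reviewers


def go_no_go(r: PilotResults) -> tuple[str, list[str]]:
    """Return ("go" | "no_go", blockers). Thresholds are illustrative assumptions."""
    blockers = []
    if r.pii_exposure_incidents > 0:
        blockers.append("pii_exposure")
    if r.policy_violation_rate > 0.01:
        blockers.append("policy_violations")
    if r.approval_override_rate > 0.10:
        blockers.append("approval_overrides")
    if r.deflection_rate < 0.20:
        blockers.append("low_deflection")
    if r.csat_delta < 0.0:
        blockers.append("csat_regression")
    return ("go" if not blockers else "no_go", blockers)
```

Returning the full list of blockers, rather than stopping at the first failure, keeps strong deflection numbers from hiding a safety failure in the same report.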