HIPAA-Safe LLM Automation for Patient Intake & Prior Auth in Medical SaaS: Vendor Selection, Architecture Patterns, and a 30-Day Pilot Plan

2026-05-07

Assess whether intake and prior auth fit LLM automation

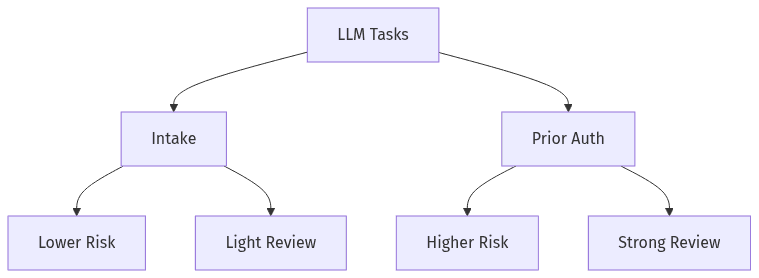

Use-case fit by risk and review needs

Patient intake and prior authorization often emerge as viable candidates for HIPAA-safe LLM automation in medical SaaS because both concentrate administrative text, documents, and repeatable determinations. Fit depends less on raw model capability and more on risk posture, error tolerance, and the availability of stable source-of-truth data. Intake generally carries lower downstream harm and more straightforward review pathways, while prior auth introduces payer-specific rules, denial risk, and defensibility expectations. Early executive alignment on limits tends to reduce later friction across compliance review, security review, and product accountability.

Pick the right use cases

The strongest-fit patterns typically cluster around extraction, classification, summarization, and draft generation in administrative workflows. Intake commonly focuses on normalizing forms and inbound documents into structured fields, while prior auth concentrates on drafting narratives and organizing supporting evidence. The operational distinction is consequence: prior auth errors more often translate into denials, delays, and rework than intake misclassification.

Set clear boundaries and expectations

LLM automation in regulated workflows tends to hold up when expectations are framed as assistance rather than autonomous decisioning. Boundary clarity usually separates draft creation from final determinations and distinguishes administrative support from clinical judgment. Overclaims such as “HIPAA compliant by default” add avoidable risk, since responsibility still spans internal controls, configuration choices, and downstream handling.

Choose vendors that support HIPAA needs

Vendor selection often becomes the gating factor for medical SaaS teams because HIPAA requirements extend beyond technical controls into contracting terms, permitted data use, and incident responsibilities. A practical shortlist typically reflects BAA availability, retention and training policies, subprocessor exposure, and the ability to constrain where sensitive information traverses. Vendor-neutral evaluation supports executive governance because lock-in risk and shifting model roadmaps can extend beyond a single product cycle. Procurement discussions often move faster when requirements are framed around PHI handling outcomes rather than brand preference.

Contract and data-handling requirements

A BAA typically serves as a baseline rather than a guarantee, because material risk frequently sits in retention, secondary use, and subprocessor access. Contract terms often determine whether prompts or outputs become stored artifacts and whether data can be used for training. Incident notification timelines and responsibility boundaries can shape exposure as much as encryption or access controls.
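The contract points above can be captured as a simple screening checklist. The sketch below is illustrative only: `VendorTerms`, `screen_vendor`, and the default thresholds are hypothetical names and placeholder values, not regulatory limits or any vendor's actual terms.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VendorTerms:
    """Hypothetical summary of one vendor's contract and data-handling terms."""
    name: str
    baa_available: bool
    trains_on_customer_data: bool
    retention_days: int            # how long prompts/outputs are stored
    subprocessors_disclosed: bool
    incident_notice_hours: int     # contractual notification window


def screen_vendor(terms: VendorTerms,
                  max_retention_days: int = 0,
                  max_notice_hours: int = 72) -> list[str]:
    """Return unmet requirements; an empty list means the vendor passes the screen.

    The default thresholds are placeholders to be set by compliance and legal.
    """
    gaps = []
    if not terms.baa_available:
        gaps.append("no BAA")
    if terms.trains_on_customer_data:
        gaps.append("customer data used for training")
    if terms.retention_days > max_retention_days:
        gaps.append(f"retention {terms.retention_days}d exceeds {max_retention_days}d")
    if not terms.subprocessors_disclosed:
        gaps.append("subprocessors not disclosed")
    if terms.incident_notice_hours > max_notice_hours:
        gaps.append(
            f"incident notice {terms.incident_notice_hours}h exceeds {max_notice_hours}h"
        )
    return gaps
```

Returning a list of gaps rather than a single pass/fail keeps the BAA as one line item among several, which mirrors its role as a baseline rather than a guarantee.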

Security and operational fit

Operational fit often reflects access isolation, reliability, quotas, and the ability to support compliance evidence without unnecessary sharing of sensitive content. Cost predictability becomes material because intake and prior auth volumes can fluctuate widely. Executive teams often prefer approaches that preserve an exit option, since vendor policy changes can shift acceptable PHI risk without any corresponding product change.

Reduce PHI exposure and keep automation safe

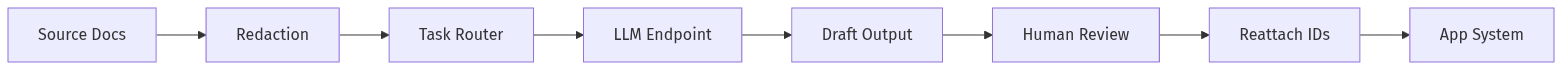

Minimize PHI before LLM, then reattach only when needed

PHI exposure remains the central risk in HIPAA-safe LLM automation for medical SaaS, particularly when prompts, logs, or vendor retention end up functioning as an additional system of record. A safer posture typically centers on data minimization, careful routing to BAA-capable endpoints, and separation between identifiers and the text required for administrative tasks. Safety also depends on quality controls, since hallucinated details in prior auth can trigger denials and downstream rework. Human-in-the-loop review often functions as the governance layer that reconciles speed with defensible outputs.

Minimize PHI in requests and records

Minimization typically emphasizes removing direct identifiers and limiting context to what the task requires, because redaction quality varies and surrogate values substituted for real identifiers often fail in edge cases. Prompt content is an underappreciated PHI surface area, particularly when stored for debugging or analytics. A conservative posture treats prompts and outputs as sensitive unless retention and access boundaries are explicit and enforceable.
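A minimal sketch of the minimize-then-reattach idea follows, assuming a regex-based pass over free text. The patterns, `minimize`, and `reattach` are hypothetical and deliberately narrow; they do not cover names, addresses, dates, or other identifiers, and they are not a substitute for a validated de-identification process.

```python
import re

# Illustrative patterns only; real intake text needs far broader coverage.
PATTERNS = {
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "PHONE": re.compile(r"\b\d{3}-\d{3}-\d{4}\b"),
    "EMAIL": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
    "MRN": re.compile(r"\bMRN[:\s]*\d+\b"),
}


def minimize(text: str) -> tuple[str, dict[str, str]]:
    """Swap matched identifiers for placeholders.

    Returns the minimized text plus a placeholder-to-original mapping that
    stays inside the trusted boundary and is never sent to the LLM vendor.
    """
    mapping: dict[str, str] = {}
    for kind, pattern in PATTERNS.items():
        def _sub(match: re.Match, kind: str = kind) -> str:
            token = f"[{kind}_{len(mapping) + 1}]"
            mapping[token] = match.group(0)
            return token
        text = pattern.sub(_sub, text)
    return text, mapping


def reattach(text: str, mapping: dict[str, str]) -> str:
    """Restore original identifiers in model output, only where needed."""
    for token, original in mapping.items():
        text = text.replace(token, original)
    return text


note = "Patient MRN: 884213, phone 555-123-4567, email jane@example.com reports knee pain."
safe_text, local_map = minimize(note)
# safe_text is what would be sent to the model; local_map is kept locally.
restored = reattach(safe_text, local_map)
```

Keeping the mapping local means the vendor sees only placeholders, while the application can still restore identifiers in a final document that authorized staff review.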

Add human review for higher-risk outcomes

Prior authorization tends to carry higher risk because payer requirements, medical-necessity framing, and documentation completeness directly affect outcomes and cost. Human review commonly acts as a control for low-confidence outputs, ambiguous cases, and policy-sensitive language. A more defensible operating model reserves final submission or decision representation for authorized staff, with automation limited to drafting and evidence organization.
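The review policy described above can be expressed as a small routing rule. This is a sketch under stated assumptions: the task names, the `confidence` score, the `flagged_terms` signal, and the 0.90 threshold are all hypothetical and would come from the team's own pipeline and risk assessment.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    AUTO_ACCEPT = "auto_accept"    # accepted into the draft record, not submitted
    HUMAN_REVIEW = "human_review"


@dataclass(frozen=True)
class Draft:
    task: str             # e.g. "intake_extraction" or "prior_auth_narrative"
    confidence: float     # hypothetical score in [0, 1] from the pipeline
    flagged_terms: bool   # policy-sensitive language detected upstream


# Assumption: prior auth narratives always go to a reviewer, whatever the score.
ALWAYS_REVIEW_TASKS = {"prior_auth_narrative"}
MIN_CONFIDENCE = 0.90  # placeholder threshold


def route(draft: Draft) -> Route:
    """Send higher-risk, low-confidence, or policy-sensitive drafts to a human."""
    if draft.task in ALWAYS_REVIEW_TASKS:
        return Route.HUMAN_REVIEW
    if draft.flagged_terms or draft.confidence < MIN_CONFIDENCE:
        return Route.HUMAN_REVIEW
    return Route.AUTO_ACCEPT
```

Note that even `AUTO_ACCEPT` here only means a draft skips the review queue; final submission still rests with authorized staff.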

Ensure visibility without exposing sensitive data

Auditability in AI-assisted workflows often becomes the practical definition of “safe” for executives, since investigations and compliance reviews rely on traceability. The challenge is capturing enough detail to explain what occurred without storing raw PHI in observability systems. Effective visibility typically reflects disciplined metadata, strict retention boundaries, and role-based access to sensitive artifacts. Monitoring also extends beyond uptime into unusual access patterns and anomalous usage, since AI endpoints can become high-value targets. HIPAA expectations commonly align with demonstrable administrative and technical safeguards rather than blanket claims of compliance.

Decide what to record

Useful records often include who initiated a request, which system component handled it, which vendor endpoint was used, what policy version governed the interaction, and the disposition of any human review. The highest-risk logging pattern is storing full prompts, raw documents, or unredacted outputs in centralized logs. Traceability improves when metadata substitutes for content wherever possible.
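One way to record these fields without storing content is a metadata-only audit record that carries a keyed fingerprint of the prompt instead of the prompt itself. The field names and `build_audit_record` below are hypothetical; the key is assumed to come from a secrets manager, and the keyed HMAC is used because a plain hash of short text can sometimes be guessed.

```python
import hashlib
import hmac
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class AuditRecord:
    request_id: str
    initiated_by: str        # staff or service identifier, not patient identity
    component: str           # system component that handled the request
    vendor_endpoint: str
    policy_version: str
    review_disposition: str  # e.g. "approved", "edited", "rejected"
    content_hmac: str        # keyed fingerprint of the prompt, not the prompt
    timestamp: str


def build_audit_record(request_id: str, initiated_by: str, component: str,
                       vendor_endpoint: str, policy_version: str,
                       review_disposition: str, prompt: str,
                       key: bytes) -> AuditRecord:
    """Build a log entry that lets two events be matched without exposing content."""
    digest = hmac.new(key, prompt.encode("utf-8"), hashlib.sha256).hexdigest()
    return AuditRecord(
        request_id=request_id,
        initiated_by=initiated_by,
        component=component,
        vendor_endpoint=vendor_endpoint,
        policy_version=policy_version,
        review_disposition=review_disposition,
        content_hmac=digest,
        timestamp=datetime.now(timezone.utc).isoformat(),
    )


record = build_audit_record(
    "req-001", "staff-42", "prior-auth-drafter", "vendor-a/chat", "v3",
    "edited", "Patient Jane Doe requests MRI approval", key=b"example-key-only",
)
log_line = json.dumps(asdict(record))  # safe to ship to centralized logging
```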

Monitor access and unusual activity

Access monitoring often matters as much as model accuracy, because these workflows concentrate valuable administrative and patient context. Role-based access control and strong authentication reduce routine exposure, while anomaly detection supports quicker recognition of misuse or compromised credentials. Security Rule alignment often depends on demonstrable controls, consistent access reviews, and monitoring evidence rather than assumptions about vendor security.
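As a rough illustration of anomaly detection on access patterns, the sketch below flags users whose request count in a window exceeds a multiple of their usual volume. The function name, the per-user baselines, and the multiplier are hypothetical; a production system would more likely rely on a SIEM or a dedicated monitoring service.

```python
from collections import Counter
from typing import Iterable, Mapping


def flag_unusual_users(events: Iterable[str],
                       baseline_per_window: Mapping[str, int],
                       multiplier: float = 3.0,
                       default_baseline: int = 10) -> dict[str, int]:
    """Return {user: request_count} for users far above their usual volume.

    `events` holds one user id per request observed in the current window.
    Users without a recorded baseline fall back to `default_baseline`.
    """
    counts = Counter(events)
    flagged = {}
    for user, count in counts.items():
        limit = baseline_per_window.get(user, default_baseline) * multiplier
        if count > limit:
            flagged[user] = count
    return flagged


window_events = ["alice"] * 12 + ["bob"] * 50
usual = {"alice": 10, "bob": 10}
suspicious = flag_unusual_users(window_events, usual)
```

The input is user ids and counts only, so the monitoring path itself does not need access to prompt or document content.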

Run a focused 30-day pilot with clear success gates

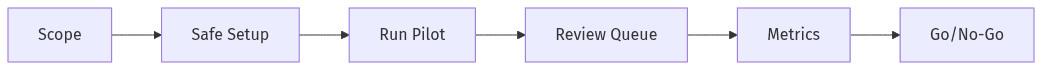

Pilot gates connect scope, review, and go/no-go

Pilot velocity often determines whether LLM automation becomes a funded program or remains an experiment, particularly in organizations with long security review timelines. A 30-day pilot is more likely to hold up when scope stays narrow, PHI exposure is tightly constrained, and evaluation criteria are set before results arrive. Executive stakeholders typically look for evidence that automation reduces administrative burden without increasing compliance risk or denial rates. Parallel progress on security artifacts and procurement constraints often differentiates pilots that transition to production from those that stall. Clear go/no-go gates reduce ambiguity and internal drift.

Pilot scope and timeline

A short pilot usually centers on a limited set of intake and prior auth sub-tasks with measurable throughput and a controlled reviewer pool. Time-to-pilot often improves with early alignment on required security evidence, since procurement and compliance reviews can dominate the calendar. Pilot credibility increases when human-review coverage and PHI minimization remain consistent throughout the evaluation window.

Success metrics and go/no-go criteria

Meaningful success gates usually combine quality, operational speed, and cost, because movement in only one dimension can mask risk in another. Common measures include accuracy against labeled outcomes, cycle-time changes, exception and escalation rates, and reviewer burden. Go/no-go decisions often hinge on whether error patterns are bounded and explainable, and whether audit readiness exists without expanding the PHI storage footprint.
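Setting gates before results arrive can be as simple as encoding them in a small check that runs against pilot measurements. The metric names and thresholds below are placeholders chosen for illustration; each team would set its own values with compliance and operations stakeholders.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PilotResults:
    accuracy: float                   # share of outputs matching labeled outcomes
    cycle_time_change: float          # fractional change; negative means faster
    escalation_rate: float            # share of cases escalated to exceptions
    reviewer_minutes_per_case: float  # reviewer burden
    raw_phi_in_logs: bool             # result of an audit of observability stores


def evaluate_gates(r: PilotResults,
                   min_accuracy: float = 0.95,
                   max_escalation_rate: float = 0.20,
                   max_reviewer_minutes: float = 5.0) -> tuple[bool, list[str]]:
    """Return (go, failures). Every gate must pass for a go decision."""
    failures = []
    if r.accuracy < min_accuracy:
        failures.append("accuracy below threshold")
    if r.cycle_time_change >= 0:
        failures.append("no cycle-time improvement")
    if r.escalation_rate > max_escalation_rate:
        failures.append("escalation rate too high")
    if r.reviewer_minutes_per_case > max_reviewer_minutes:
        failures.append("reviewer burden too high")
    if r.raw_phi_in_logs:
        failures.append("raw PHI found in logs")
    return (not failures, failures)
```

Requiring all gates to pass reflects the point that improvement in a single dimension, such as speed, can mask risk in another.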