AI Call Summaries + CRM Auto-Updates for US Professional Services: An Implementation Guide with Security, Consent, and ROI Benchmarks

2026-05-03

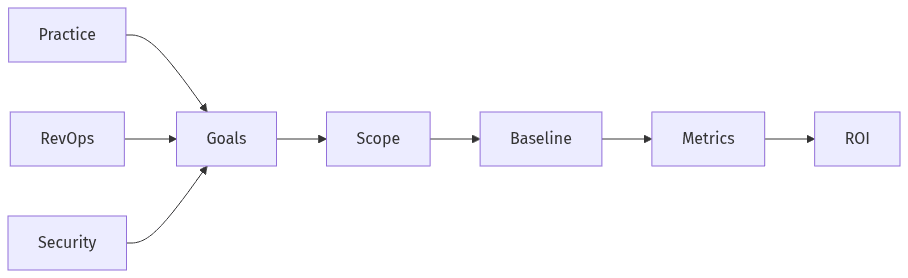

Set goals, scope, and success metrics

Align outcomes, scope, and metrics across teams

In US professional services, the success of AI call summaries and CRM auto-updates tends to depend less on model capability than on explicit agreement about scope, intended outcomes, and what counts as evidence. Executive stakeholders often converge on a narrow set of outcomes (reduced administrative time, consistent next steps, and more reliable pipeline visibility) while underweighting the cross-functional dependencies that drive adoption. A shared definition of “done” typically reduces tension between practice leaders optimizing for speed, RevOps optimizing for data integrity, and security teams optimizing for controlled handling of recorded client communications and downstream CRM changes.

Use cases and CRM scope

Well-scoped deployments usually separate “capture” (recording and summarizing the call) from “commit” (changing CRM records). Many organizations prefer to log summaries and action items as notes only, while others allow writeback into Salesforce or HubSpot objects. Scope clarity often turns on three questions: which interactions qualify, what is summarized, and which records and fields can change without increasing duplicate creation or degrading confidence in the system of record.

Baselines and ROI targets

ROI discussions tend to hold up only when anchored to pre-rollout baselines: activity logging rates, frequency of missing next steps, time spent on manual notes, and downstream data quality indicators. Professional services leaders also map value to relationship continuity, where consistent follow-ups and intact timelines affect client confidence in ways that extend beyond administrative effort.
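The baselines named above can be computed from a simple export of pre-rollout call records. The following is a minimal sketch, assuming a hypothetical `CallRecord` shape; the field names and sample values are illustrative and do not come from any CRM schema or real dataset.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class CallRecord:
    # Hypothetical export row; field names are assumptions for illustration.
    call_id: str
    logged_in_crm: bool        # was the call logged as a CRM activity?
    next_step: Optional[str]   # free-text next step, if one was recorded
    note_minutes: float        # self-reported time spent on manual notes


def baseline_metrics(calls: list[CallRecord]) -> dict[str, float]:
    """Summarize pre-rollout behavior so later results have a comparison point."""
    total = len(calls)
    if total == 0:
        raise ValueError("need at least one call to compute a baseline")
    logged = sum(1 for c in calls if c.logged_in_crm)
    # Treat None and whitespace-only strings as a missing next step.
    missing = sum(1 for c in calls if not (c.next_step or "").strip())
    return {
        "activity_logging_rate": logged / total,
        "missing_next_step_rate": missing / total,
        "avg_note_minutes": sum(c.note_minutes for c in calls) / total,
    }


# Made-up sample rows, used only to show the calculation.
sample = [
    CallRecord("c1", True, "Send proposal", 12.0),
    CallRecord("c2", False, None, 0.0),
    CallRecord("c3", True, "", 8.0),
    CallRecord("c4", True, "Schedule review", 10.0),
]
metrics = baseline_metrics(sample)
```

Capturing these figures before rollout matters more than the exact formula: the same function can be rerun on pilot data so that any claimed improvement is measured against the same definitions.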

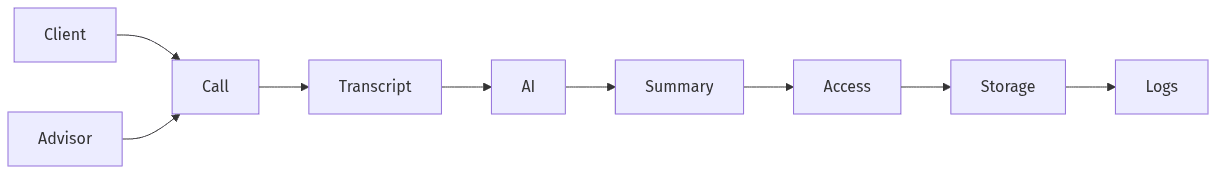

Choose a secure approach for AI and data

Secure flow from call to summary and storage

Security posture often determines time-to-value for AI meeting tools in consulting and advisory environments. SOC 2 alignment frequently appears in vendor materials, yet executive review typically treats it as a baseline signal rather than evidence of fit, especially when sensitive client information enters transcripts and summaries. Internal security teams tend to scrutinize the full data lifecycle (capture, processing, storage, and exposure pathways) to limit inadvertent disclosure to external model endpoints and to maintain defensible controls for audits and client inquiries.

Model and hosting choice

Model and hosting decisions often reflect client confidentiality posture more than technical preference. Private or hosted approaches tend to align with conservative expectations around handling client information, while public LLM use raises questions about retention, secondary use, and boundary controls. Executive risk tolerance often changes when summaries move from passive notes to structured CRM updates that affect forecasting and documented client commitments.

Access and protection standards

Access controls and protection standards generally map to familiar SOC 2-style expectations: least-privilege access, encryption, audit logs, and clear vendor due diligence. Risk language from the OWASP Top 10 for LLM Applications increasingly appears in these reviews, largely because meeting content can include confidential or regulated information with disproportionate impact if exposed, retained unexpectedly, or misused.

Operationalize consent, disclosure, and retention

Consent and disclosure practices are often the most underestimated determinant of adoption for AI call summaries in the US. Recording norms vary by client relationship, and all-party (often called two-party) consent requirements in some states raise sensitivity around disclosures even when calls feel routine. Retention choices also shape stakeholder comfort: longer retention improves searchability and continuity, but it also increases exposure surface, discovery risk, and client concern. Governance language therefore often becomes a commercial constraint rather than a back-office compliance detail.

Consent and disclosure scripts

Consistent disclosure language typically reduces uncertainty for employees and clients, particularly when AI-generated summaries are involved. Legal review remains a common dependency, since recording requirements and disclosure expectations vary by jurisdiction. In professional services, tone and precision matter because disclosure becomes part of the client experience as well as a risk control.

Retention, access, and deletion requests

Retention, access, and deletion expectations often determine whether a solution can clear internal security review and client scrutiny. Transparency into who can view transcripts and summaries often carries as much weight as model choice. Deletion and removal requests become operationally material once meeting content is treated as an enterprise record rather than temporary collaboration data.
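One way to make retention and deletion requests operational is a scheduled sweep that flags stored items for removal. The sketch below assumes a hypothetical policy table (`RETENTION_DAYS`) and a hypothetical item shape; the retention periods shown are placeholders, not recommendations, and real values would come from legal and client agreements.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical retention policy in days per artifact type (placeholder values).
RETENTION_DAYS = {"transcript": 90, "summary": 365}


def is_expired(kind: str, created_at: datetime, now: datetime) -> bool:
    """True when an item has outlived the retention window for its type."""
    return now - created_at > timedelta(days=RETENTION_DAYS[kind])


def items_to_delete(items: list[dict], now: datetime,
                    deletion_requests: set[str]) -> list[str]:
    """Return ids that are past retention or belong to a client who asked for deletion."""
    flagged = []
    for item in items:
        requested = item["client_id"] in deletion_requests
        if requested or is_expired(item["kind"], item["created_at"], now):
            flagged.append(item["id"])
    return flagged


# Made-up inventory used only to show the behavior.
utc = timezone.utc
now = datetime(2026, 5, 3, tzinfo=utc)
inventory = [
    {"id": "t1", "kind": "transcript", "client_id": "initech",
     "created_at": datetime(2026, 1, 1, tzinfo=utc)},   # older than 90 days
    {"id": "s1", "kind": "summary", "client_id": "initech",
     "created_at": datetime(2026, 1, 1, tzinfo=utc)},   # within 365 days
    {"id": "t2", "kind": "transcript", "client_id": "acme",
     "created_at": datetime(2026, 4, 20, tzinfo=utc)},  # client requested deletion
    {"id": "t3", "kind": "transcript", "client_id": "globex",
     "created_at": datetime(2026, 4, 20, tzinfo=utc)},  # recent, no request
]
flagged_ids = items_to_delete(inventory, now, deletion_requests={"acme"})
```

Keeping transcripts on a shorter clock than summaries is a design choice made here for illustration; it reflects the trade-off between searchability and exposure described above.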

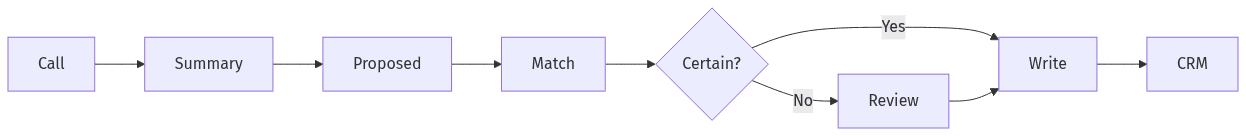

Update the CRM safely and accurately

Review gates reduce wrong CRM updates

CRM writeback introduces a distinct risk category: incorrect information becomes durable, reportable, and widely propagated. Executive skepticism often concentrates on hallucinations, misattributions, and subtle inaccuracies that read as plausible. Duplicate record creation compounds the issue by degrading pipeline hygiene and weakening confidence in reporting. Writeback governance therefore often outranks summary readability, since even well-written summaries can cause downstream problems when the wrong object, field, or record association is updated.

CRM update rules and approved fields

Field boundaries typically separate low-risk updates from higher-risk changes. Notes and task creation are commonly treated as safer than edits to core opportunity fields, while approved-field approaches reduce ambiguity across Salesforce and HubSpot configurations. Professional services teams often prioritize next steps, stakeholders, and follow-up commitments because those elements affect continuity and delivery expectations.
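An approved-field rule can be expressed as an allowlist that every proposed update passes through before anything is written. This is a minimal sketch under stated assumptions: the object and field names in `APPROVED_FIELDS` are hypothetical labels, not Salesforce or HubSpot API names, and the actual write call is deliberately left out.

```python
# Hypothetical allowlist: object type -> fields the AI may write without review.
APPROVED_FIELDS = {
    "Note": {"body"},
    "Task": {"subject", "due_date", "description"},
    "Opportunity": {"next_step"},
}


def split_update(object_type: str, proposed: dict) -> tuple[dict, dict]:
    """Split a proposed update into fields safe to write and fields held back.

    Unknown object types have no approved fields, so everything is held.
    """
    allowed = APPROVED_FIELDS.get(object_type, set())
    approved = {k: v for k, v in proposed.items() if k in allowed}
    held = {k: v for k, v in proposed.items() if k not in allowed}
    return approved, held


# Example: a summary suggests three changes to an opportunity.
approved, held = split_update(
    "Opportunity",
    {"next_step": "Send SOW", "amount": 50000, "stage": "Negotiation"},
)
# approved -> {"next_step": "Send SOW"}
# held     -> {"amount": 50000, "stage": "Negotiation"}
```

Defaulting to "held" for anything not explicitly listed keeps new or renamed fields out of automated writeback until someone approves them.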

Review and exception handling

Human approval gates commonly appear where uncertainty is highest, both to manage accuracy and to preserve user confidence in the record. Exception handling also tends to support auditability, particularly when summaries drive CRM changes. Audit trails often stabilize governance discussions by clarifying that autonomous writeback is a policy choice, not an enterprise-safe default.
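A review gate with an audit trail can be sketched as a routing function that records every decision. The threshold, the set of high-risk fields, and the `confidence` score are all assumptions for illustration; whether a given tool exposes a usable confidence value is something to verify with the vendor.

```python
from dataclasses import dataclass
from datetime import datetime, timezone

REVIEW_THRESHOLD = 0.85                              # hypothetical cutoff
HIGH_RISK_FIELDS = {"amount", "stage", "close_date"}  # hypothetical field labels


@dataclass
class ProposedChange:
    record_id: str
    field_name: str
    new_value: str
    confidence: float  # assumed score in [0, 1] attached to the extraction


def route(change: ProposedChange, audit_log: list[dict]) -> str:
    """Send a change to human review or auto-apply, and log the decision."""
    needs_review = (
        change.field_name in HIGH_RISK_FIELDS
        or change.confidence < REVIEW_THRESHOLD
    )
    decision = "needs_review" if needs_review else "auto_apply"
    audit_log.append({
        "record_id": change.record_id,
        "field": change.field_name,
        "decision": decision,
        "confidence": change.confidence,
        "logged_at": datetime.now(timezone.utc).isoformat(),
    })
    return decision


audit_log: list[dict] = []
d1 = route(ProposedChange("opp-1", "next_step", "Send SOW", 0.95), audit_log)
d2 = route(ProposedChange("opp-1", "amount", "50000", 0.99), audit_log)
d3 = route(ProposedChange("opp-2", "next_step", "Call CFO", 0.60), audit_log)
```

High-risk fields go to review regardless of confidence, which keeps autonomous writeback an explicit policy decision rather than something a high score can unlock on its own.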

Run a short pilot and prove ROI

Pilot expectations in bottom-of-funnel (BOFU) evaluations often balance speed with evidence. Leaders typically look for early confirmation that notes become more consistent, records remain clean, and security concerns stay bounded. Adoption patterns usually surface quickly: when meeting capture or review feels intrusive, utilization drops; when CRM outcomes appear dependable, usage spreads through teams. Reporting often carries as much weight as technical outputs, since executives need to see time reclaimed, activity logging lift, and fewer missing next steps alongside a steady security and consent posture.

30-day pilot plan

Short pilots often concentrate on a single practice area to limit variability and compress the learning cycle. Early measurement usually focuses on activity logging rates, completeness of next steps, and the share of updates requiring review. Security and consent feedback in this window often predicts whether expansion will be delayed in governance review.
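The early pilot measurements can be rolled into a small scorecard that compares pilot results with the pre-rollout baseline. The sketch below assumes both sides are expressed as rates between 0 and 1 under hypothetical key names; all numbers are made up to show the arithmetic and are not benchmark results.

```python
def pilot_scorecard(baseline: dict[str, float], pilot: dict[str, float],
                    updates_total: int, updates_reviewed: int) -> dict[str, float]:
    """Report change versus baseline in percentage points, plus the review share."""
    if updates_total <= 0:
        raise ValueError("updates_total must be positive")

    def change_pts(key: str) -> float:
        # Difference of two rates, expressed in percentage points.
        return round((pilot[key] - baseline[key]) * 100, 1)

    return {
        "logging_rate_change_pts": change_pts("activity_logging_rate"),
        "missing_next_step_change_pts": change_pts("missing_next_step_rate"),
        "review_share": round(updates_reviewed / updates_total, 3),
    }


# Illustrative inputs only.
scorecard = pilot_scorecard(
    baseline={"activity_logging_rate": 0.60, "missing_next_step_rate": 0.40},
    pilot={"activity_logging_rate": 0.85, "missing_next_step_rate": 0.15},
    updates_total=200,
    updates_reviewed=30,
)
```

Reporting changes in percentage points rather than relative percentages avoids overstating lift when the baseline rate is low.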

60-day expansion plan

Expansion periods typically emphasize repeatability and consistent reporting across teams rather than new capability. Common signals include sustained adoption, stable duplicate rates, and fewer exceptions requiring escalation. Change management becomes more visible at this stage, because consistent disclosure language and dependable CRM habits often matter as much as summarization quality.